How to Monitor Competitor Prices Without Integrations

Competitor pricing changes daily. A product priced at $49 today may drop to $44 tomorrow, appear in a flash sale next week, and go out of stock shortly after.

For e-commerce businesses, these shifts directly affect conversion rates, margins, and market positioning. The companies that react fastest tend to come out ahead.

The problem? Most businesses assume monitoring requires complex integrations — custom API connections, development sprints, ongoing maintenance. In reality, there's a faster path.

Why API-Based Integrations Hold You Back

The traditional approach sounds logical: connect to competitor systems via APIs, pull pricing data automatically, pipe it into your analytics stack.

But here's what actually happens:

Most competitor websites don't offer public pricing APIs. When they do, access is usually restricted, rate-limited, or tied to a commercial partnership.

Building integrations is expensive. Authentication, data normalization, infrastructure — and every time a competitor updates their platform, your pipeline breaks.

Maintenance never ends. Engineering teams end up spending more time repairing integrations than actually using pricing data.

The result: months of work for a system that's perpetually one platform update away from breaking.

The Better Approach: Direct Data Collection

Instead of connecting to systems, you monitor the publicly visible data that competitors already expose — product pages, category listings, search results, marketplace entries.

No partnerships needed. No API approval process. No internal integration.

Here's how it works in practice:

Step 1 — Define What You Want to Monitor

Start with a clear scope:

Which competitors matter most?

Which product categories or SKUs do you want to track?

How often do prices need updating — monthly, weekly, or daily?

This doesn't need to be exhaustive on day one. Start with your top 3 competitors and your 100 highest-revenue products. You can expand from there.
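One way to keep that scope explicit is to write it down as a small piece of configuration. The sketch below is only an illustration: the `MonitoringScope` class, its field names, and the example domains and SKUs are all hypothetical, not part of any particular tool.

```python
from dataclasses import dataclass

ALLOWED_FREQUENCIES = {"monthly", "weekly", "daily"}


@dataclass
class MonitoringScope:
    """Hypothetical container for the three scoping questions above."""
    competitors: list[str]      # which competitors matter most
    skus: list[str]             # which products to track
    frequency: str = "daily"    # how often prices need updating

    def __post_init__(self):
        # Fail early on a typo instead of silently never running.
        if self.frequency not in ALLOWED_FREQUENCIES:
            raise ValueError(f"unknown frequency: {self.frequency!r}")

    def jobs(self):
        """Every (competitor, sku) pair that has to be checked per run."""
        return [(c, s) for c in self.competitors for s in self.skus]


# Example values are placeholders, not real competitors.
scope = MonitoringScope(
    competitors=["competitor-a.example", "competitor-b.example", "competitor-c.example"],
    skus=["SKU-001", "SKU-002"],
    frequency="weekly",
)
print(len(scope.jobs()))  # number of page checks per run
```

Counting the jobs per run is a quick sanity check on scale: 3 competitors and 100 products already means 300 page checks every cycle.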

Step 2 — Collect Pricing Data Automatically

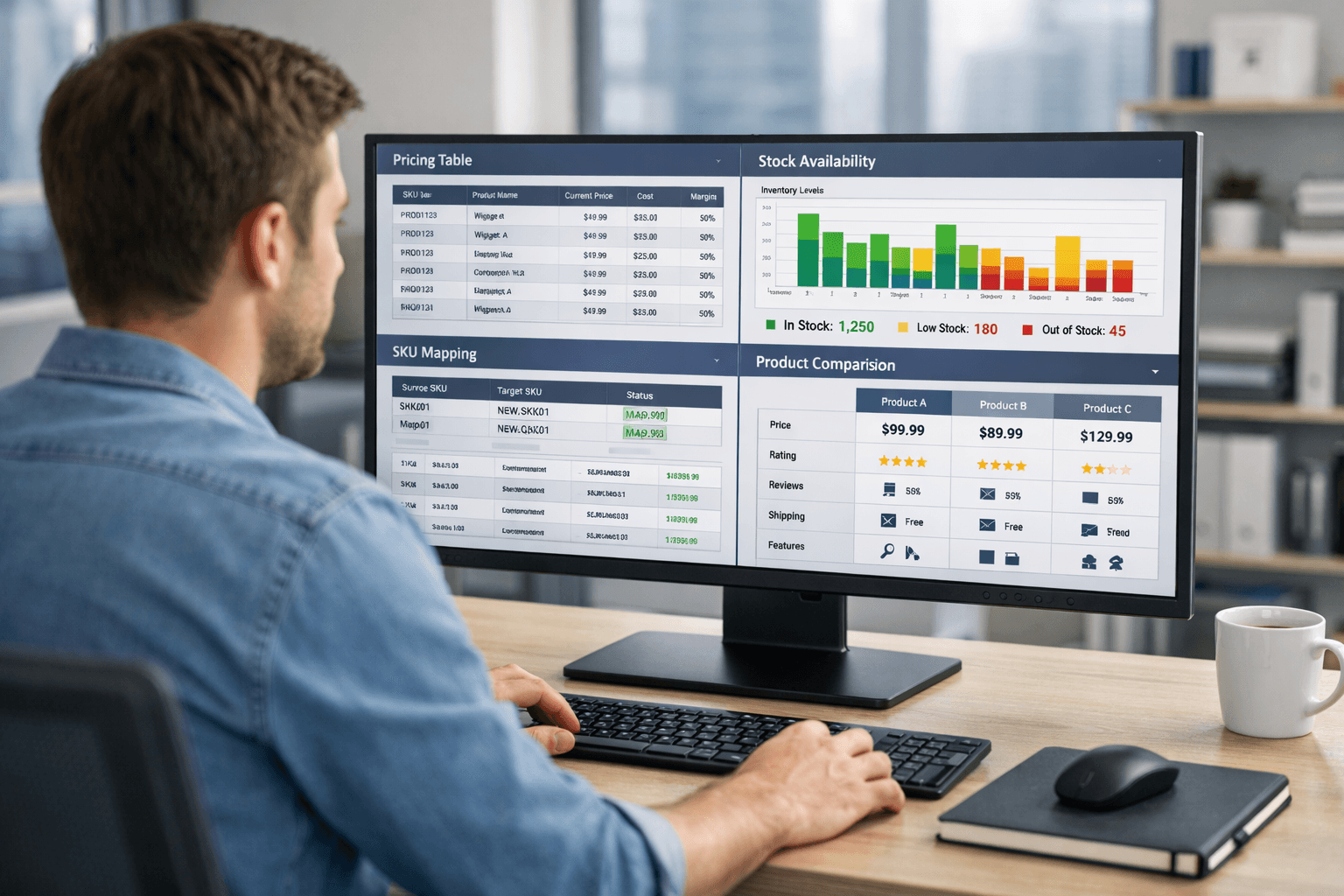

Automated extraction visits the target pages and pulls structured pricing information — current price, original price, discount status, availability, currency.

The key word is automatically. Manual checks are only viable at small scale. Once you're monitoring 10+ competitors across thousands of SKUs, you need a system that runs without human input.

What makes reliable collection difficult:

Modern e-commerce sites often load prices via JavaScript after the initial page load, so basic scrapers that only read the raw HTML miss them entirely

Anti-bot protection (CAPTCHAs, IP bans, fingerprinting) actively blocks automated access

Page structures change constantly — a CSS class rename can silently break an extraction pipeline

Price displays vary: "From $X", "Was $X / Now $Y", tax-inclusive vs. exclusive, multi-seller marketplace listings

This is why building it in-house is harder than it looks. The first version works. Maintaining it at scale is the real challenge.
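To make the price-display problem concrete, here is a minimal sketch of a parser for two of the formats listed above, "From $X" and "Was $X / Now $Y". It assumes US-dollar strings with a `$` sign and only those two patterns; the function name and the returned field names are illustrative, and a production parser would need to cover far more cases.

```python
import re
from decimal import Decimal

# Matches a dollar amount such as "$44", "$49.99" or "$1,299.00".
_AMOUNT = re.compile(r"\$\s*(\d[\d,]*(?:\.\d{1,2})?)")


def parse_price_display(text):
    """Turn a displayed price string into structured fields.

    Returns None when no amount is found, so a changed page layout
    shows up as missing data instead of a silently wrong price.
    """
    amounts = [Decimal(m.replace(",", "")) for m in _AMOUNT.findall(text)]
    if not amounts:
        return None

    lowered = text.lower()
    if "was" in lowered and "now" in lowered and len(amounts) >= 2:
        # "Was $X / Now $Y": the first amount is the original price.
        return {"original": amounts[0], "current": amounts[1], "is_from_price": False}

    # "From $X" is a starting price, not necessarily the price of this SKU.
    return {
        "original": None,
        "current": amounts[0],
        "is_from_price": lowered.lstrip().startswith("from"),
    }


print(parse_price_display("Was $49.00 / Now $44.00"))
print(parse_price_display("From $1,299"))
print(parse_price_display("Sold out"))
```

Returning `None` for unrecognized text is a deliberate choice here: a missing value is easy to alert on, while a wrong number flows straight into pricing decisions.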

Step 3 — Normalize the Data

Raw extraction gives you numbers. Useful analysis requires consistency.

Normalization means:

converting all prices to a common currency and number format

separating base price from discount price

flagging out-of-stock items separately from priced items

Without this step, comparing prices across competitors is unreliable.
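A rough sketch of what that normalization could look like follows. Everything specific in it is an assumption made for illustration: the raw record keys, the `NormalizedPrice` fields, and the fixed exchange rates, which a real system would load from a rates source instead of hard-coding.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Optional

# Hypothetical fixed rates, for illustration only.
RATES_TO_USD = {"USD": Decimal("1"), "EUR": Decimal("1.10"), "GBP": Decimal("1.25")}


@dataclass(frozen=True)
class NormalizedPrice:
    sku: str
    competitor: str
    base_price_usd: Optional[Decimal]      # regular price
    discount_price_usd: Optional[Decimal]  # only set while a discount is active
    in_stock: bool                         # kept separate from the price fields


def normalize(raw: dict) -> NormalizedPrice:
    rate = RATES_TO_USD[raw["currency"]]

    def to_usd(amount):
        if amount is None:
            return None
        return (Decimal(str(amount)) * rate).quantize(Decimal("0.01"))

    current = to_usd(raw.get("current_price"))
    original = to_usd(raw.get("original_price"))
    on_sale = original is not None and current is not None and current < original

    if on_sale:
        base, discount = original, current
    else:
        base, discount = (current if current is not None else original), None

    return NormalizedPrice(
        sku=raw["sku"],
        competitor=raw["competitor"],
        base_price_usd=base,
        discount_price_usd=discount,
        in_stock=raw.get("availability") == "in_stock",
    )


record = normalize({
    "sku": "SKU-001", "competitor": "competitor-a.example", "currency": "EUR",
    "current_price": "40.00", "original_price": "50.00", "availability": "in_stock",
})
print(record)
```

Using `Decimal` instead of floats avoids rounding surprises when prices are compared for equality or sorted across competitors.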

Step 4 — Deliver It in a Format You'll Actually Use

Pricing data is only valuable if your team can act on it quickly. That means delivery in formats that slot into your existing workflow — spreadsheets, dashboards, data feeds, or direct exports — without requiring a new internal tool to interpret.
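As one example of a delivery format, the sketch below writes normalized rows to CSV using Python's standard `csv` module, which spreadsheet tools can open directly. The column names are assumptions chosen for this example, not a fixed schema.

```python
import csv
import io

# Hypothetical column layout for the export.
FIELDS = ["sku", "competitor", "base_price", "discount_price", "in_stock"]


def to_csv(rows):
    """Serialize a list of dicts to CSV text with a fixed column order."""
    buffer = io.StringIO(newline="")
    # extrasaction="ignore" drops keys that are not part of the export layout.
    writer = csv.DictWriter(buffer, fieldnames=FIELDS, extrasaction="ignore")
    writer.writeheader()
    for row in rows:
        writer.writerow(row)
    return buffer.getvalue()


print(to_csv([
    {"sku": "SKU-001", "competitor": "competitor-a.example",
     "base_price": "49.00", "discount_price": "44.00", "in_stock": True},
    {"sku": "SKU-002", "competitor": "competitor-a.example",
     "base_price": "19.00", "discount_price": None, "in_stock": False},
]))
```

Keeping the column order fixed means that a spreadsheet or dashboard built on last week's file keeps working with this week's.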

From Data to Decisions

Once you have reliable, normalized competitor pricing data flowing in regularly, you can start doing the work that actually moves the needle:

Spot underpricing opportunities — products where you're leaving margin on the table

Catch competitor promotions early — before they affect your traffic

Set dynamic pricing rules — automatically adjust prices based on competitor behavior

Track trends over time — understand seasonal patterns and promotional cycles, not just today's snapshot

The difference between companies that use pricing data well and those that don't isn't access to data — it's the reliability and freshness of that data.
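Two of the checks above, spotting underpricing and catching promotions, can be sketched in a few lines once the data is normalized. The function names, the 5% margin threshold, and the snapshot format (a dict of SKU to price) are all assumptions made for this illustration.

```python
from decimal import Decimal


def underpriced(own_price, competitor_prices, margin=Decimal("0.05")):
    """True when our price sits more than `margin` below the cheapest competitor."""
    available = [p for p in competitor_prices if p is not None]
    if not available:
        return False  # nothing to compare against
    return own_price < min(available) * (1 - margin)


def new_promotions(previous, current):
    """SKUs whose competitor price dropped between two snapshots."""
    return sorted(
        sku for sku, price in current.items()
        if sku in previous and price < previous[sku]
    )


# Our $40 price against competitors at $49 and $44: room to raise the price.
print(underpriced(Decimal("40"), [Decimal("49"), Decimal("44")]))

yesterday = {"SKU-001": Decimal("49"), "SKU-002": Decimal("20")}
today = {"SKU-001": Decimal("44"), "SKU-002": Decimal("20")}
print(new_promotions(yesterday, today))
```

Both checks depend on the snapshots being fresh and comparable, which is why the collection and normalization steps matter more than the analysis code itself.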

Why Most In-House Efforts Stall

Teams that build competitor monitoring internally tend to hit the same ceiling: the system works for a few months, then gradually degrades as websites change, anti-bot measures tighten, and nobody has time to maintain it.

The engineering cost compounds. What starts as a two-week project becomes a permanent maintenance burden.

The practical alternative is to use infrastructure that's already built for this — where extraction, rendering, proxy management, and normalization are handled for you, and you receive clean, structured datasets on a reliable schedule.

How ShopScraping Fits In

ShopScraping is built specifically for this use case: competitor price monitoring without the integration overhead.

You define the products, categories, or URLs you want to track. We handle the extraction — including JavaScript-rendered content, anti-bot environments, and constantly changing page structures. You receive structured pricing datasets, updated automatically, ready for analysis.

No scraping infrastructure to build. No proxies to manage. No breakage to fix when a competitor redesigns their product page.

The output works with tools your team already uses — including direct Excel exports for teams that prefer to work in spreadsheets.

Whether you currently monitor competitors manually, rely on fragile in-house scripts, or don't monitor them at all, you can get started with ShopScraping and have your first dataset running within a day.

Summary

Monitoring competitor prices without integrations is not just possible — it's the more practical approach for most businesses.

The path is straightforward:

Define what you want to monitor

Collect pricing data automatically from public pages

Normalize it into a consistent, comparable format

Deliver it in a format your team can act on immediately

The complexity isn't in the concept. It's in executing steps 2 and 3 reliably at scale — and keeping them working as competitor websites evolve.

That's exactly the problem ShopScraping solves.