Build vs Buy: Do You Really Need to Build Your Own Competitor Data Pipeline?

In competitive e-commerce markets, pricing decisions depend on having up-to-date visibility into competitor prices. As a result, many companies consider building internal systems to collect and track competitor data.

At first glance, this seems straightforward: visit competitor websites, extract prices, and store the data for analysis.

In practice, this is where complexity begins.

This raises a key question:

Should you build your own data collection system — or rely on external data sources?

What You Actually Need for Competitor Price Monitoring

When companies think about price monitoring, they often focus on analytics: dashboards, pricing logic, and decision-making tools.

However, before any of that is possible, one requirement must be met:

consistent access to reliable competitor data

In practical terms, this means collecting:

product information

current and promotional prices

availability status

marketplace seller data

This data must be:

accurate

regularly updated

structured and consistent

Without this foundation, any downstream analysis becomes unreliable.

Why Building a Scraping System Seems Simple

Many teams start with the assumption that data collection is the easy part.

A basic prototype can often be built quickly:

send requests to product pages

extract price fields

store the results
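
The three steps above can be sketched in a few dozen lines of standard-library Python. This is only an illustration of how small such a prototype is, not a recommended design: the `class="price"` markup, the `prices` table, and the function names are all assumptions made for this example, and real product pages will differ from site to site.

```python
import re
import sqlite3
from datetime import datetime, timezone
from decimal import Decimal
from typing import Optional
from urllib.request import Request, urlopen

# Assumed markup: <span class="price">$19.99</span>. Hypothetical, per-site in reality.
PRICE_PATTERN = re.compile(r'class="price"[^>]*>\s*[^\d<]*(\d+(?:\.\d{1,2})?)')


def fetch_html(url: str, timeout: float = 10.0) -> str:
    """Step 1: send a request to a product page."""
    request = Request(url, headers={"User-Agent": "price-prototype/0.1"})
    with urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")


def extract_price(html: str) -> Optional[Decimal]:
    """Step 2: extract the price field, or None if the pattern is absent."""
    match = PRICE_PATTERN.search(html)
    return Decimal(match.group(1)) if match else None


def store_price(conn: sqlite3.Connection, url: str, price: Decimal) -> None:
    """Step 3: store the result with a collection timestamp."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS prices (url TEXT, price TEXT, collected_at TEXT)"
    )
    conn.execute(
        "INSERT INTO prices VALUES (?, ?, ?)",
        (url, str(price), datetime.now(timezone.utc).isoformat()),
    )
    conn.commit()
```

Even this sketch hints at the fragility discussed later: a price written as "$1,299.00" would be captured as "1", and a page that renders its price in the browser would return no match at all.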

At a small scale, this approach may appear sufficient.

This creates the impression that building a full system is just a matter of scaling up.

Where Internal Systems Become Complex

The difficulty becomes visible when moving from a prototype to a production system.

Access and Anti-Bot Protection

Most e-commerce websites actively limit automated access.

Systems must deal with:

request blocking and throttling

behavioral detection

session consistency requirements

CAPTCHA challenges

Maintaining stable access is not a one-time fix; it requires continuous adjustment.

Dynamic Websites

Modern e-commerce platforms rely heavily on dynamic content.

Product data is often rendered in the browser and may vary depending on:

session state

location

device

Reliable extraction requires more than simple requests and parsing.

Constant Changes

Websites are updated frequently.

Even small frontend changes can break data extraction logic, requiring ongoing maintenance and monitoring.
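
One common way to notice this kind of breakage is to track how often extraction succeeds and raise an alert when the rate drops sharply. The sketch below is a minimal illustration under assumed thresholds; the function names, the 0.95 baseline, and the 0.2 tolerated drop are hypothetical values chosen for the example.

```python
from typing import Optional, Sequence


def success_rate(prices: Sequence[Optional[float]]) -> float:
    """Share of pages in a crawl batch where a price was extracted."""
    if not prices:
        return 0.0  # an empty batch is treated as a failed batch
    found = sum(1 for price in prices if price is not None)
    return found / len(prices)


def extraction_looks_broken(
    prices: Sequence[Optional[float]],
    baseline_rate: float = 0.95,   # assumed historical success rate
    tolerated_drop: float = 0.2,   # assumed acceptable deviation
) -> bool:
    """Flag a batch whose success rate falls well below the baseline."""
    return success_rate(prices) < baseline_rate - tolerated_drop
```

A check like this only detects that something changed; someone still has to inspect the page and update the extraction logic.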

Scaling Data Collection

Collecting data for a few products is manageable.

Scaling to thousands or millions of products introduces additional requirements:

distributed crawling

scheduling and prioritization

failure handling

data consistency

At this stage, data collection becomes a system rather than a script.
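
To make two of these requirements concrete, here is a minimal single-process sketch of scheduling and failure handling: a priority queue of due jobs, where failed fetches are retried with exponential backoff and successful ones are rescheduled at a regular interval. The job layout, intervals, and function names are assumptions for illustration; a production system would distribute this across workers and persist the queue.

```python
import heapq
from typing import Callable, List, Tuple

# (due_time_seconds, url, consecutive_failures); hypothetical job layout
Job = Tuple[float, str, int]


def backoff_delay(failures: int, base: float = 60.0, cap: float = 1800.0) -> float:
    """Exponential backoff: base, 2*base, 4*base, ... limited by cap."""
    return min(cap, base * 2 ** (failures - 1))


def run_due_jobs(
    queue: List[Job],
    now: float,
    fetch: Callable[[str], bool],
    refresh_interval: float = 3600.0,
) -> List[str]:
    """Run every job due at `now`, reschedule it, and return the failed URLs."""
    failed: List[str] = []
    rescheduled: List[Job] = []
    while queue and queue[0][0] <= now:
        _, url, failures = heapq.heappop(queue)
        try:
            ok = fetch(url)
        except Exception:
            ok = False  # any fetch error counts as a failed attempt
        if ok:
            rescheduled.append((now + refresh_interval, url, 0))
        else:
            failures += 1
            failed.append(url)
            rescheduled.append((now + backoff_delay(failures), url, failures))
    # Push after the loop so a rescheduled job is never run twice in one pass.
    for job in rescheduled:
        heapq.heappush(queue, job)
    return failed
```

Here `fetch` stands in for whatever collects a page and reports success; passing it in as a parameter keeps the scheduling logic testable without network access.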

Data Quality and Structure

Raw scraped data is not immediately usable.

It must be:

cleaned

validated

normalized

Without this step, the data cannot be reliably used in internal systems.
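
As a rough illustration of what cleaning, validating, and normalizing can involve, the sketch below converts raw price strings in different regional formats into decimals and maps free-text availability labels onto a fixed vocabulary. The field names, the availability labels, and the separator heuristic are assumptions for this example, and the heuristic will misread some inputs (it assumes the string contains only the price).

```python
import re
from decimal import Decimal
from typing import Optional

# Hypothetical label mapping; real sites use many more variants.
AVAILABILITY_MAP = {
    "in stock": "in_stock",
    "available": "in_stock",
    "out of stock": "out_of_stock",
    "sold out": "out_of_stock",
}


def parse_price(raw: str) -> Optional[Decimal]:
    """Normalize strings like '$1,299.00' or '1.299,00 €' to a Decimal."""
    cleaned = re.sub(r"[^\d.,]", "", raw)
    last_dot, last_comma = cleaned.rfind("."), cleaned.rfind(",")
    sep_pos = max(last_dot, last_comma)
    integer_part, fraction = cleaned, ""
    if sep_pos != -1:
        trailing = cleaned[sep_pos + 1:]
        both_kinds = last_dot != -1 and last_comma != -1
        # Heuristic: a lone separator followed by exactly three digits is a
        # thousands separator; otherwise the last separator is the decimal one.
        if both_kinds or len(trailing) != 3:
            integer_part, fraction = cleaned[:sep_pos], trailing
    digits = re.sub(r"[.,]", "", integer_part)
    if not digits:
        return None
    value = Decimal(f"{digits}.{fraction}" if fraction else digits)
    return value if value > 0 else None  # validation: reject zero prices


def normalize_availability(raw: str) -> str:
    return AVAILABILITY_MAP.get(raw.strip().lower(), "unknown")


def clean_record(raw: dict) -> Optional[dict]:
    """Return a cleaned record, or None when validation fails."""
    price = parse_price(raw.get("price", ""))
    title = " ".join(raw.get("title", "").split())  # collapse whitespace
    if price is None or not title:
        return None
    return {
        "title": title,
        "price": price,
        "availability": normalize_availability(raw.get("availability", "")),
    }
```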

The Hidden Cost of Building

These challenges translate into ongoing operational effort.

Building a reliable system typically requires:

dedicated engineering resources

infrastructure for access management

continuous maintenance

monitoring and issue resolution

Over time, data collection becomes a persistent responsibility rather than a one-time project.

Importantly, this effort does not directly contribute to pricing strategy; it only enables it.

When Building Internally May Make Sense

There are cases where building your own system is justified.

For example:

highly specific or non-standard data requirements

need for full control over collection logic

existing infrastructure and expertise in scraping

data collection as part of core business capabilities

In these situations, internal investment can be aligned with long-term goals.

A Simpler Approach: Treat Data Collection as a Separate Layer

An alternative approach is to separate data collection from everything else.

Instead of building and maintaining scraping infrastructure internally, companies can rely on external data collection and focus on how they use the data.

In this model:

competitor data is collected externally

structured datasets are delivered on a regular basis

internal systems handle storage, matching, and analysis

This removes the need to manage:

anti-bot systems

crawling infrastructure

scraper maintenance

The focus shifts from how to collect data to how to use it.

What You Get Instead of Building

With this approach, your team receives ready-to-use data rather than raw scraping outputs.

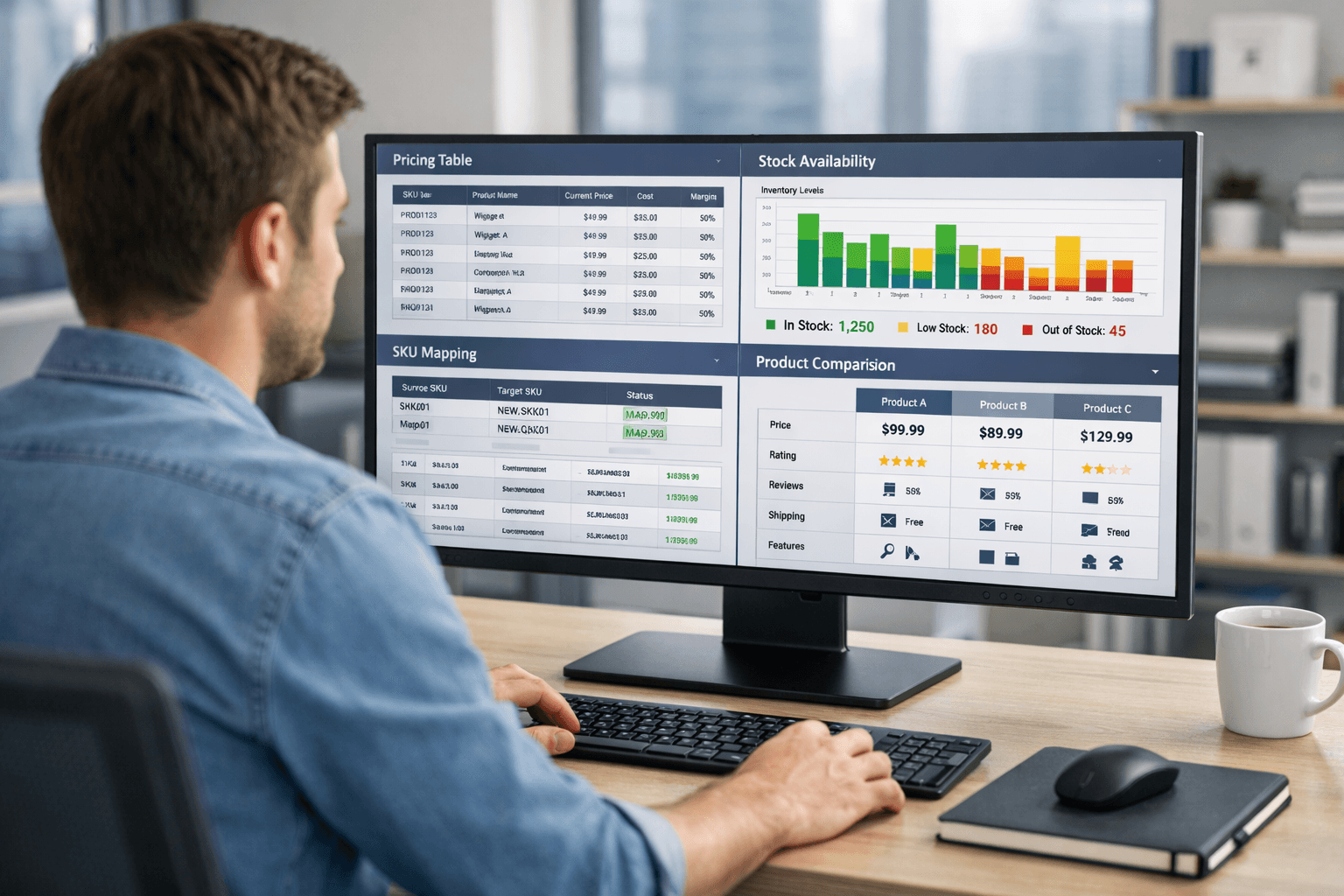

Typical datasets include:

product titles and identifiers (SKU, EAN, UPC, GTIN where available)

current and promotional prices

availability status

seller information (for marketplaces)

product attributes and metadata

The data is delivered in structured formats such as JSON, CSV, or XLSX, making it easy to integrate into existing systems.

Because the data is already cleaned and standardized, there is no need to build additional pipelines just to make it usable.
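
To give a sense of what integration can look like, here is a minimal sketch that loads a delivered CSV file into typed records. The column names (`gtin`, `title`, `price`, `promo_price`, `availability`, `seller`) and the `in_stock` label are assumptions made for this example; the actual schema depends on the data provider.

```python
import csv
from dataclasses import dataclass
from decimal import Decimal
from typing import List, Optional, TextIO


@dataclass(frozen=True)
class CompetitorOffer:
    gtin: str
    title: str
    price: Decimal
    promo_price: Optional[Decimal]  # None when no promotion is running
    in_stock: bool
    seller: str


def load_offers(csv_file: TextIO) -> List[CompetitorOffer]:
    """Read a delivered CSV (hypothetical column names) into typed records."""
    offers = []
    for row in csv.DictReader(csv_file):
        promo = row["promo_price"].strip()
        offers.append(
            CompetitorOffer(
                gtin=row["gtin"].strip(),
                title=row["title"].strip(),
                price=Decimal(row["price"].strip()),
                promo_price=Decimal(promo) if promo else None,
                in_stock=row["availability"].strip().lower() == "in_stock",
                seller=row["seller"].strip(),
            )
        )
    return offers
```

In use, this would be called with an open file, for example `load_offers(open("offers.csv", newline="", encoding="utf-8"))`, where the filename is a placeholder.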

What Remains on Your Side

Separating data collection does not limit flexibility.

Your team retains full control over:

product matching and catalog alignment

internal databases and data models

pricing logic and rules

dashboards and reporting

automation and decision-making

This allows you to design pricing systems exactly as needed, without being constrained by how data is collected.
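
As one small example of the logic that stays in-house, the sketch below matches competitor offers to an internal catalog by GTIN and computes how far each own price sits above the lowest in-stock competitor price. The data shapes and function names are assumptions for illustration; real matching often has to handle missing or inconsistent identifiers.

```python
from decimal import Decimal
from typing import Dict, Iterable, Tuple

# (gtin, price, in_stock); hypothetical minimal offer shape
Offer = Tuple[str, Decimal, bool]


def lowest_competitor_prices(offers: Iterable[Offer]) -> Dict[str, Decimal]:
    """Lowest in-stock competitor price per GTIN."""
    lowest: Dict[str, Decimal] = {}
    for gtin, price, in_stock in offers:
        if not in_stock:
            continue  # an unavailable offer does not compete on price
        if gtin not in lowest or price < lowest[gtin]:
            lowest[gtin] = price
    return lowest


def price_gaps(catalog: Dict[str, Decimal], offers: Iterable[Offer]) -> Dict[str, Decimal]:
    """Own price minus lowest competitor price, for GTINs present in both."""
    lowest = lowest_competitor_prices(offers)
    return {
        gtin: own_price - lowest[gtin]
        for gtin, own_price in catalog.items()
        if gtin in lowest
    }
```

A positive gap means the own price is above the cheapest available competitor; what to do with that signal is a pricing decision, not a data collection one.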

Comparing the Two Approaches

Building internally:

full control over data collection

high flexibility

significant engineering and maintenance effort

slower time to reliable data

Using external data:

immediate access to structured datasets

reduced operational overhead

predictable data delivery

no need to maintain scraping infrastructure

The decision depends on whether you want to own data collection as a technical system—or treat it as an input.

Where the Real Work Happens

It is important to distinguish between two separate problems:

collecting data

using data

Data collection is an infrastructure problem.

Pricing strategy is a business problem.

The most effective pricing teams focus their energy on the second — and treat the first as a solved problem.

Conclusion

Building a competitor price monitoring system requires solving a complex data collection problem before any analysis can take place.

While it is possible to build this infrastructure internally, it introduces ongoing complexity that many teams underestimate. For most e-commerce businesses, the engineering effort required to keep scrapers running reliably at scale is better spent on pricing logic, analytics, and decision-making.

ShopScraping handles the data collection layer — crawling, rendering, anti-bot management, normalization, and structured delivery — so your team can skip straight to using the data.

If you're evaluating whether to build or buy, see what ShopScraping delivers and decide from there.