Ecommerce Web Scraping in 2026: Why It Became a Real Engineering Problem


By 2026, ecommerce is no longer a static catalog of products with predictable pricing. Prices change multiple times per day, search results are personalized, and even product availability depends on location, device, or user behavior.

In this environment, data is not just useful — it is operationally critical. Companies rely on it for pricing decisions, assortment planning, and competitive analysis. But collecting that data has become significantly more difficult than it used to be.

This article explains why.

Why Ecommerce Data Became So Hard to Collect

A decade ago, scraping ecommerce websites was relatively straightforward: request a page, parse the HTML, extract the fields.

That model no longer works.

Most modern ecommerce platforms are built as dynamic applications. Content is rendered in the browser, often differently for each session. The same product page can show different prices, promotions, or availability depending on:

location

device type

cookies and session history

traffic source

Search results are even less predictable. Ranking is influenced by personalization algorithms, meaning two users may see entirely different product orders.

At the same time, anti-bot systems have evolved. Instead of simply blocking suspicious requests, they analyze behavior across many signals — how a page is loaded, how a user navigates, how consistent a session appears.

As a result, scraping today is less about “downloading pages” and more about replicating realistic user behavior at scale.

What a Modern Scraping System Actually Needs

Because of these changes, reliable data collection requires more than just scripts or parsers. It becomes a system with three core responsibilities.

1. Data Collection in Dynamic Environments

Some data can still be accessed via APIs or simple HTTP requests. But many ecommerce sites rely heavily on JavaScript, requiring full browser execution.

This means systems often need to:

render pages like a real browser

interact with elements (scrolling, clicking)

manage navigation flows

extract data after dynamic content loads

In practice, efficient setups combine both approaches: using browsers where necessary, and switching to direct API calls when possible.
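A minimal sketch of that combination, assuming the two fetchers are supplied by the caller. The names `fetch_product`, `has_price`, `cheap_fetch`, and `browser_fetch` are hypothetical, and the completeness check is deliberately crude:

```python
from typing import Callable, Tuple

Fetcher = Callable[[str], str]  # takes a URL, returns the response body as text


def has_price(body: str) -> bool:
    """Crude completeness check: assume a usable response contains a "price" key."""
    return '"price"' in body


def fetch_product(url: str, cheap_fetch: Fetcher, browser_fetch: Fetcher,
                  is_complete: Callable[[str], bool] = has_price) -> Tuple[str, str]:
    """Try the cheap path first; fall back to full rendering only if needed.

    Returns (method_used, body).
    """
    try:
        body = cheap_fetch(url)
        if is_complete(body):
            return "http", body
    except Exception:
        pass  # network error or block: fall through to the browser path
    return "browser", browser_fetch(url)


# Usage with stand-in fetchers (no network involved):
method, body = fetch_product(
    "https://shop.example/p/1",
    cheap_fetch=lambda url: "<div id='root'></div>",       # empty app shell
    browser_fetch=lambda url: '<script>{"price": 9.99}</script>',
)
print(method)
```

In a real setup the cheap fetcher would be an HTTP client and the expensive one a headless browser. The point of the sketch is only the ordering: pay for rendering when the cheap response is visibly incomplete.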

2. Identity and Anti-Detection Management

The hardest part of modern scraping is not extraction — it is staying undetected.

Websites evaluate requests using a wide range of signals, including network characteristics, browser configuration, and behavioral patterns. Simply rotating IP addresses is no longer sufficient.

Reliable systems simulate consistent “identities”, which include:

IP and location

browser characteristics

cookies and session state

realistic navigation behavior

They also distribute activity across regions to capture local pricing and availability differences.

At scale, this becomes a probabilistic problem: collect as much data as possible while minimizing the risk of being blocked or receiving distorted results.
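One way to keep identities consistent is to bundle the attributes listed above into a single object and always assign the same one to the same site and region. The sketch below assumes a hypothetical `Identity` record and `pick_identity` helper; the proxy addresses and user-agent strings are placeholders:

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class Identity:
    """One consistent persona: same proxy, browser profile, and cookies on every visit."""
    name: str
    proxy: str        # placeholder proxy endpoint
    region: str
    user_agent: str
    cookies: dict = field(default_factory=dict)


def pick_identity(site: str, region: str, pool: list) -> Identity:
    """Deterministically map (site, region) to one identity from that region."""
    candidates = [i for i in pool if i.region == region]
    if not candidates:
        raise LookupError(f"no identity available for region {region!r}")
    digest = hashlib.sha256(f"{site}|{region}".encode()).digest()
    return candidates[int.from_bytes(digest[:8], "big") % len(candidates)]


pool = [
    Identity("de-1", "proxy-de-1.example:8080", "de", "UA-placeholder-1"),
    Identity("de-2", "proxy-de-2.example:8080", "de", "UA-placeholder-2"),
    Identity("us-1", "proxy-us-1.example:8080", "us", "UA-placeholder-3"),
]
chosen = pick_identity("shop.example", "de", pool)
print(chosen.name)
```

Hashing rather than random choice means a site sees the same persona on repeat visits, so its cookies and session history stay coherent.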

3. Turning Raw Data into Usable Data

Even when data is successfully collected, it is rarely ready for analysis.

Different retailers structure information differently. Product names vary, attributes are inconsistent, and duplicates are common.

Processing pipelines typically handle:

cleaning and structuring raw data

matching identical products across sites

normalizing brands, categories, and attributes

converting currencies and units

Machine learning is increasingly used here — especially for product matching and extracting structured information from unstructured descriptions.

Without this layer, scraped data has limited practical value.
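As a rough illustration of the non-ML baseline, the sketch below normalizes currencies, units, and titles using only the standard library. The exchange rates are made-up constants and all function names are hypothetical; production pipelines would use dated rates and far richer matching:

```python
import re
from difflib import SequenceMatcher

# Assumed static rates to EUR, for illustration only.
RATES_TO_EUR = {"EUR": 1.0, "USD": 0.9, "GBP": 1.15}
GRAMS_PER_UNIT = {"g": 1.0, "kg": 1000.0}


def to_eur(amount: float, currency: str) -> float:
    return round(amount * RATES_TO_EUR[currency], 2)


def weight_in_grams(text: str):
    """Find a weight such as '1.5 kg' or '500g' and return it in grams, else None."""
    m = re.search(r"(\d+(?:\.\d+)?)\s*(kg|g)\b", text.lower())
    return float(m.group(1)) * GRAMS_PER_UNIT[m.group(2)] if m else None


def normalize_title(title: str) -> str:
    """Lowercase, drop punctuation, and sort tokens so word order does not matter."""
    return " ".join(sorted(re.findall(r"[a-z0-9]+", title.lower())))


def title_similarity(a: str, b: str) -> float:
    """Character-level similarity of the normalized titles, between 0 and 1."""
    return SequenceMatcher(None, normalize_title(a), normalize_title(b)).ratio()


print(weight_in_grams("Coffee Beans 1.5 kg"))
print(title_similarity("Acme Coffee Beans, 1kg", "acme 1kg coffee beans"))
```

String similarity alone produces false matches between product variants (sizes, colors), which is one reason learned matchers are replacing it.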

The Core Challenges in 2026

Even with proper systems in place, several challenges remain fundamental.

Anti-Bot Systems

Modern defenses often do not block aggressively. Instead, they degrade data quality — for example, by hiding products, altering rankings, or delaying responses.

This makes detection harder: the system may appear to work while returning incorrect data.
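One hedged way to catch silent degradation is to compare what the scraper sees against a small control sample, for example a manually verified session. The sketch below uses Jaccard overlap of product IDs; the function names and the 0.8 threshold are assumptions, and the threshold needs tuning because personalization also causes legitimate differences:

```python
def jaccard(a: set, b: set) -> float:
    """Share of items common to both sets; 1.0 means identical."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def looks_degraded(scraped_ids, control_ids, min_overlap: float = 0.8) -> bool:
    """Flag a result set that overlaps too little with the control sample."""
    return jaccard(set(scraped_ids), set(control_ids)) < min_overlap


control = ["sku-1", "sku-2", "sku-3", "sku-4"]
print(looks_degraded(["sku-1", "sku-2"], control))  # half the products are missing
```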

Personalization

Two identical requests can produce different results.

For analytics and pricing models, this creates a reproducibility problem. Systems must control for session state and context, or explicitly track it.
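Explicit tracking can be as simple as storing the request context next to every observation and deriving a stable key from it. A minimal sketch, with hypothetical names (`Observation`, `observe`, `context_key`):

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    product_id: str
    price: float
    context: tuple  # sorted (key, value) pairs describing the session

    @property
    def context_key(self) -> str:
        """Short, stable fingerprint of the context the price was seen in."""
        payload = json.dumps(self.context).encode()
        return hashlib.sha256(payload).hexdigest()[:12]


def observe(product_id: str, price: float, **context: str) -> Observation:
    # Sorting makes the key independent of keyword-argument order.
    return Observation(product_id, price, tuple(sorted(context.items())))


a = observe("p1", 9.99, region="de", device="mobile")
b = observe("p1", 10.49, device="mobile", region="de")
print(a.context_key == b.context_key)
```

Two price points with the same key are comparable over time; points with different keys may differ because of context rather than a real price change.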

Geographic Variability

Prices, taxes, and availability differ across regions.

Accurate analysis requires precise control over:

geolocation

currency

language

store or delivery settings

Without this, comparisons become unreliable.
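The four settings above can be treated as one immutable record, so that prices are only ever compared within the same record. A sketch under that assumption; `RegionContext` and `cheapest_by_context` are illustrative names:

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class RegionContext:
    geolocation: str  # e.g. country code or postal code
    currency: str
    language: str
    store: str        # store or delivery setting


def cheapest_by_context(rows):
    """rows: iterable of (RegionContext, retailer, price).

    Returns the cheapest retailer per context, never mixing contexts.
    """
    grouped = defaultdict(dict)
    for ctx, retailer, price in rows:
        grouped[ctx][retailer] = price
    return {ctx: min(prices, key=prices.get) for ctx, prices in grouped.items()}


de = RegionContext("DE", "EUR", "de", "berlin")
us = RegionContext("US", "USD", "en", "new-york")
rows = [(de, "a", 10.0), (de, "b", 9.5), (us, "a", 8.0), (us, "b", 11.0)]
print(cheapest_by_context(rows))
```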

Constant Frontend Changes

Ecommerce sites update frequently. Small UI changes can break extraction logic.

Robust systems rely less on fragile page structure and more on stable signals such as embedded structured data, supplemented by adaptive extraction strategies where no such signal exists.
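Many product pages embed schema.org data as JSON-LD, which tends to survive visual redesigns. The sketch below reads it with the standard library only; `JsonLdParser` and `extract_offer` are hypothetical names, and it assumes a simple `Product` block with a single `offers` object or list:

```python
import json
from html.parser import HTMLParser


class JsonLdParser(HTMLParser):
    """Collect the parsed contents of <script type="application/ld+json"> blocks."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                self.blocks.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # malformed block: skip it rather than fail the page


def extract_offer(html: str):
    """Return (name, price, currency) from the first Product block, or None."""
    parser = JsonLdParser()
    parser.feed(html)
    for block in parser.blocks:
        if isinstance(block, dict) and block.get("@type") == "Product":
            offer = block.get("offers") or {}
            if isinstance(offer, list):
                offer = offer[0] if offer else {}
            return block.get("name"), offer.get("price"), offer.get("priceCurrency")
    return None


page = ('<html><head><script type="application/ld+json">'
        '{"@type": "Product", "name": "Mug", '
        '"offers": {"price": "12.50", "priceCurrency": "EUR"}}'
        '</script></head><body><div class="x9f">12,50</div></body></html>')
print(extract_offer(page))
```

The extraction never touches the `x9f` class name, so a CSS refactor would not break it. Pages without structured data still need a selector-based or adaptive fallback.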

Legal and Compliance Constraints

Scraping operates within a complex regulatory environment.

Organizations need to consider:

terms of service

data usage policies

privacy regulations (e.g., GDPR)

responsible request patterns

Compliance is increasingly part of system design, not just legal review.
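Responsible request patterns are the part that lends itself most directly to code. The sketch below checks robots.txt rules with Python's `urllib.robotparser` and spaces out requests; `build_robots`, `PoliteGate`, and the `price-bot` user agent are hypothetical, and honoring robots.txt does not by itself settle terms-of-service or privacy questions:

```python
import time
from urllib.robotparser import RobotFileParser


def build_robots(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt content that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


class PoliteGate:
    def __init__(self, robots: RobotFileParser, user_agent: str, min_delay: float = 1.0):
        self.robots = robots
        self.user_agent = user_agent
        # Use the site's Crawl-delay when declared, otherwise our own default.
        self.delay = float(robots.crawl_delay(user_agent) or min_delay)
        self._last = None

    def allowed(self, url: str) -> bool:
        return self.robots.can_fetch(self.user_agent, url)

    def wait(self, clock=time.monotonic, sleep=time.sleep) -> float:
        """Sleep just long enough to keep `delay` seconds between requests."""
        now = clock()
        pause = 0.0 if self._last is None else max(0.0, self.delay - (now - self._last))
        if pause:
            sleep(pause)
        self._last = now + pause
        return pause


robots = build_robots("User-agent: *\nDisallow: /cart\nCrawl-delay: 2\n")
gate = PoliteGate(robots, "price-bot")
print(gate.allowed("https://shop.example/product/1"), gate.allowed("https://shop.example/cart"))
```

Passing `clock` and `sleep` as parameters keeps the limiter testable without real waiting.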

What Companies Use This Data For

Despite the complexity, demand for ecommerce data continues to grow because of its direct business impact.

The most common applications include:

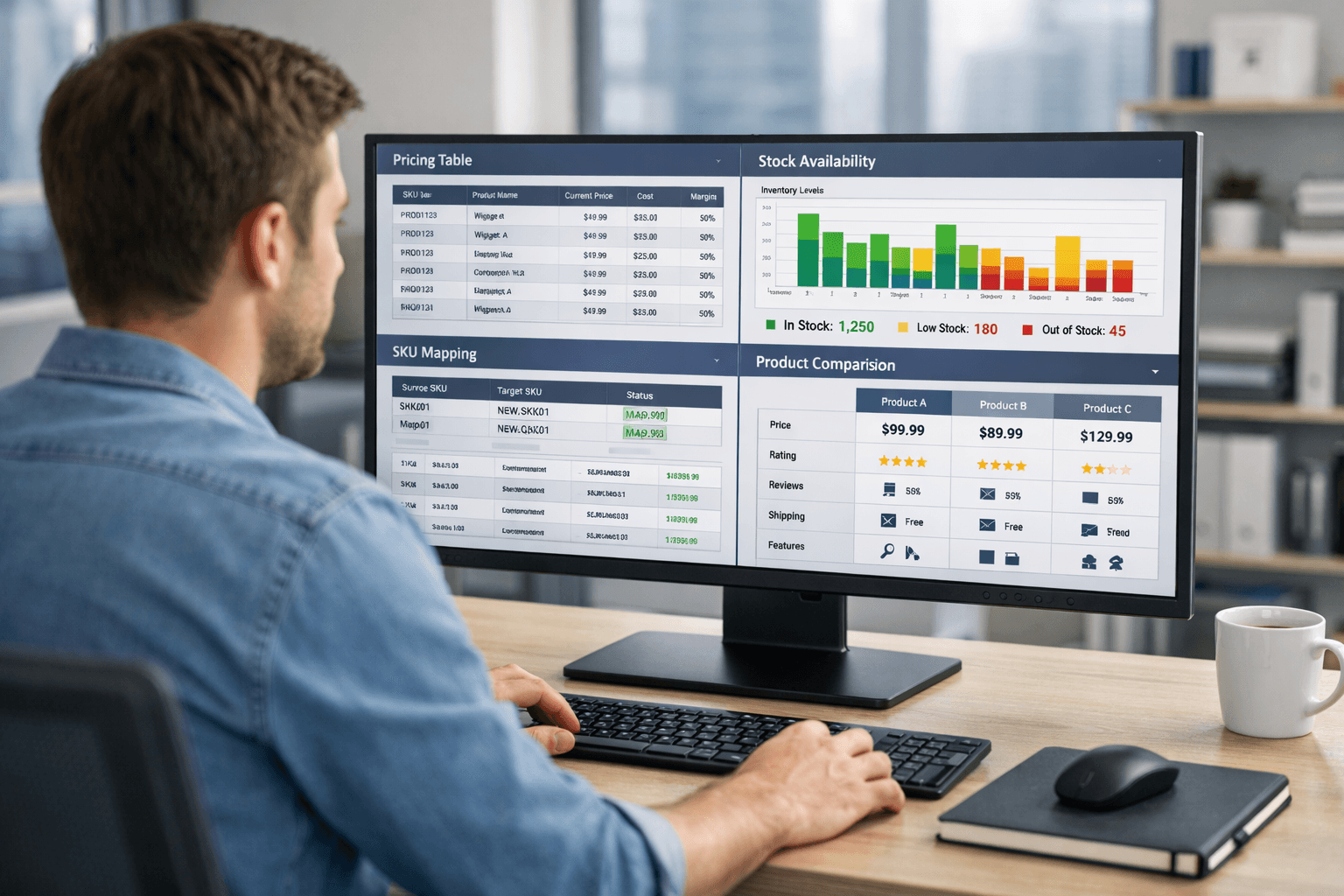

Competitive pricing

Tracking competitor prices and promotions to adjust pricing strategies.

Assortment analysis

Understanding competitor catalogs, identifying gaps, and monitoring trends.

Product matching and benchmarking

Comparing identical products across platforms to build pricing indexes and market insights.

MAP compliance

Detecting unauthorized discounting across marketplaces.

Review and sentiment analysis

Analyzing customer feedback to identify issues and opportunities.

Inventory monitoring

Tracking stock availability as a signal of demand and supply dynamics.
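Once listings are matched to SKUs, some of these applications reduce to simple comparisons. As an illustration of the MAP compliance case, here is a sketch that flags listings below a minimum advertised price; the record layout and the name `map_violations` are assumptions:

```python
def map_violations(listings, map_prices, tolerance: float = 0.0):
    """Return listings priced below the minimum advertised price (MAP).

    listings: dicts with "sku", "seller", and "price".
    map_prices: mapping of sku to its MAP; SKUs without a MAP are ignored.
    """
    violations = []
    for item in listings:
        floor = map_prices.get(item["sku"])
        if floor is not None and item["price"] < floor - tolerance:
            gap = round(floor - item["price"], 2)
            violations.append({**item, "map": floor, "gap": gap})
    return violations


listings = [
    {"sku": "A1", "seller": "s1", "price": 89.0},
    {"sku": "A1", "seller": "s2", "price": 99.0},
    {"sku": "B2", "seller": "s3", "price": 5.0},
]
print(map_violations(listings, {"A1": 95.0}))
```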

From Scripts to Infrastructure

One of the most important shifts is conceptual.

Scraping is no longer a collection of scripts. It behaves more like a data infrastructure layer:

distributed

continuously running

monitored and optimized

integrated into downstream systems

This shift also changes the cost structure. The main expense is no longer development time but ongoing operation: infrastructure, proxy networks, and data processing.

Efficiency comes from selectivity — collecting only what is needed, when it is needed.
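One simple form of selectivity is an adaptive recrawl interval: revisit products whose prices change often, and back off from those that do not. The sketch below is one possible rule, with assumed bounds of one hour and one week:

```python
def next_interval(current_hours: float, changed: bool,
                  min_hours: float = 1.0, max_hours: float = 168.0) -> float:
    """Halve the interval after a change, double it otherwise, within bounds."""
    proposed = current_hours / 2 if changed else current_hours * 2
    return max(min_hours, min(max_hours, proposed))


interval = 24.0
for changed in (False, False, True):
    interval = next_interval(interval, changed)
print(interval)
```

A product that stays stable drifts toward weekly checks, while a volatile one converges on hourly checks, so the request budget follows where the data actually moves.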

The Direction of the Market

Several trends are becoming clear:

Anti-bot systems are increasingly driven by machine learning

Personalization continues to grow, reducing determinism

Legal frameworks are tightening, and requirements increasingly differ by region

Automation in data extraction and processing is improving

At the same time, maintenance is becoming more automated. Systems are better at detecting failures, adapting to changes, and recovering without manual intervention.
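Failure detection often starts with something modest, such as watching how often each field is actually filled. The sketch below compares current fill rates to a stored baseline; the function names and the 0.2 drop threshold are assumptions:

```python
def fill_rates(records, fields):
    """Fraction of records in which each field has a non-empty value."""
    total = len(records)
    if total == 0:
        return {f: 0.0 for f in fields}
    return {
        f: sum(1 for r in records if r.get(f) not in (None, "")) / total
        for f in fields
    }


def broken_fields(records, fields, baseline, max_drop: float = 0.2):
    """Fields whose fill rate fell more than `max_drop` below the baseline."""
    current = fill_rates(records, fields)
    return sorted(f for f in fields if baseline.get(f, 0.0) - current[f] > max_drop)


batch = [
    {"name": "a", "price": None},
    {"name": "b", "price": ""},
    {"name": "c", "price": 3.0},
    {"name": "d", "price": None},
]
print(broken_fields(batch, ["name", "price"], {"name": 1.0, "price": 0.98}))
```

A sudden drop in one field usually points to a changed page layout, which is the kind of signal an automated repair step can act on.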

Conclusion

Ecommerce web scraping in 2026 is no longer a simple technical task. It is a complex, evolving engineering problem shaped by dynamic frontends, anti-bot systems, and fragmented data environments.

The value of ecommerce data has increased — but so has the cost of obtaining it reliably.

Understanding this gap is essential for anyone working with pricing, analytics, or competitive intelligence in modern ecommerce.