Competitor Price Monitoring in Ecommerce: Why Data Matters More Than Scraping

Focus on pricing, not scraping — a simpler way to get competitor data

In highly competitive ecommerce markets, pricing is rarely static. Retailers continuously adjust prices in response to competitor actions, promotions, inventory levels, and demand fluctuations. In many categories, prices change multiple times per day.

To keep up, companies rely on pricing intelligence systems that track competitor prices and market conditions in near real time. These systems help identify pricing gaps, react faster to market changes, and support dynamic pricing strategies.

At a high level, the idea sounds simple: collect competitor prices and analyze them. In practice, this is where most teams run into unexpected complexity.

Why Competitor Monitoring Is Harder Than It Looks

What appears to be a straightforward task—extracting prices from websites—quickly turns into a technical challenge.

Modern ecommerce platforms are not designed to be easily scraped. They are dynamic, frequently updated, and protected by increasingly sophisticated anti-bot systems.

Teams attempting to build internal monitoring solutions typically encounter three categories of problems.

Access and anti-bot protection

Most large retailers actively detect and restrict automated access. Systems analyze request patterns, browser behavior, and session consistency. Without proper infrastructure, scrapers are blocked, throttled, or served incomplete data.

Dynamic and JavaScript-heavy content

Product data is often rendered dynamically in the browser. Extracting it reliably can require running the page in a full browser and interacting with it, not just sending simple HTTP requests.

Constant change and maintenance

Even small frontend updates can break data extraction logic. Maintaining scrapers becomes an ongoing engineering effort rather than a one-time implementation.

As a result, competitor price monitoring is not just about data extraction—it is about building and operating a resilient data collection system.

The Core Insight: Separate Data Collection from Pricing Intelligence

One of the most important realizations in this space is that competitor monitoring consists of two fundamentally different layers:

Data acquisition — collecting pricing and product data from online stores

Pricing intelligence — analyzing that data and making decisions

Most internal projects try to solve both at once. This significantly increases complexity and delays time to value.

In practice, these layers require very different capabilities. Data acquisition is infrastructure-heavy and operationally complex. Pricing intelligence, on the other hand, is where companies create actual business value.

Our approach focuses on separating these concerns.

Our Approach: We Deliver the Data, You Build the Intelligence

Instead of requiring your team to build and maintain scraping infrastructure, we provide ready-to-use competitor pricing data.

We handle the entire data acquisition layer:

crawling competitor websites and marketplaces

handling dynamic, JavaScript-driven pages

managing anti-bot protection and access stability

maintaining extraction logic as websites change

structuring and validating collected data

Your team receives clean, structured datasets that can be immediately used for analysis.

This allows you to focus on pricing strategy, analytics, and decision-making—rather than on the mechanics of data collection.

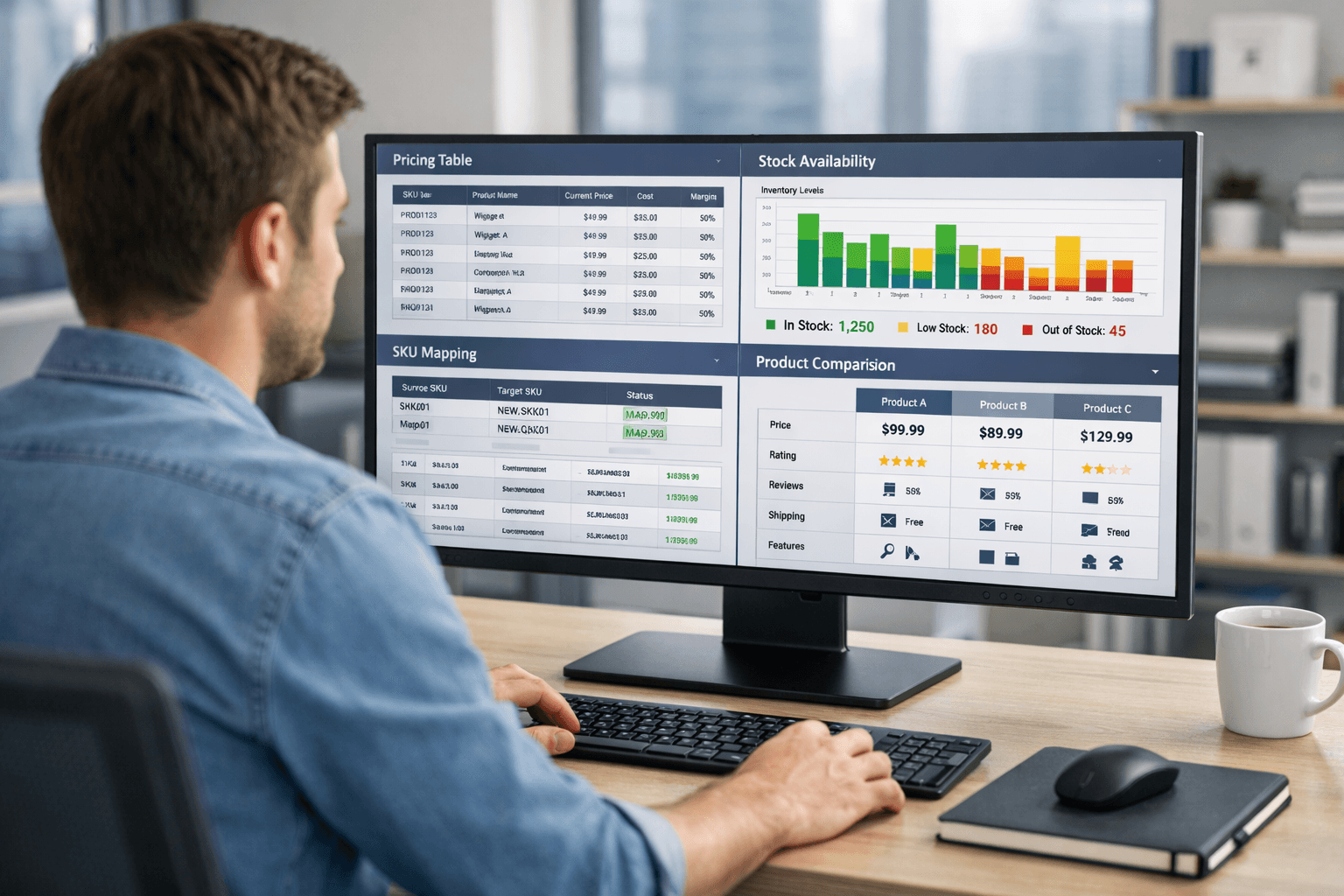

What the Data Looks Like in Practice

For competitor price monitoring to be useful, data must be consistent, structured, and reliable.

Typical datasets include:

product titles and descriptions

product identifiers (SKU, EAN, UPC, GTIN where available)

current and promotional prices

availability status

brand information

product attributes and specifications

product URLs and metadata

The data is normalized and validated before delivery, reducing the need for internal data cleaning.

It is delivered in structured formats such as CSV, XLSX, or JSON, making it easy to integrate into existing analytics or pricing systems.
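As a rough illustration of what consuming such a dataset might look like, the sketch below parses a small JSON sample into typed records. The field names (`retailer`, `ean`, `promo_price`, and so on), the sample values, and the `PriceRecord` class are assumptions made for this example, not a description of the actual delivery schema.

```python
import json
from dataclasses import dataclass
from decimal import Decimal
from typing import Optional

# Hypothetical sample: field names and values are illustrative only.
SAMPLE_JSON = """
[
  {"retailer": "shop-a", "title": "Acme Kettle 1.7L", "ean": "4006381333931",
   "brand": "Acme", "price": "34.99", "promo_price": "29.99",
   "currency": "EUR", "in_stock": true, "url": "https://example.com/a/kettle"},
  {"retailer": "shop-b", "title": "ACME 1.7 l kettle", "ean": null,
   "brand": "Acme", "price": "32.50", "promo_price": null,
   "currency": "EUR", "in_stock": false, "url": "https://example.com/b/kettle"}
]
"""


@dataclass(frozen=True)
class PriceRecord:
    retailer: str
    title: str
    ean: Optional[str]            # identifiers may be missing
    brand: str
    price: Decimal                # regular price
    promo_price: Optional[Decimal]
    currency: str
    in_stock: bool
    url: str

    @property
    def effective_price(self) -> Decimal:
        """Promotional price when one is present, otherwise the regular price."""
        return self.promo_price if self.promo_price is not None else self.price


def parse_records(raw: str) -> list[PriceRecord]:
    """Convert a JSON array of rows into PriceRecord objects."""
    records = []
    for row in json.loads(raw):
        promo = row.get("promo_price")
        records.append(PriceRecord(
            retailer=row["retailer"],
            title=row["title"],
            ean=row.get("ean"),
            brand=row["brand"],
            price=Decimal(row["price"]),  # Decimal avoids float rounding on money
            promo_price=Decimal(promo) if promo is not None else None,
            currency=row["currency"],
            in_stock=bool(row["in_stock"]),
            url=row["url"],
        ))
    return records


records = parse_records(SAMPLE_JSON)
for r in records:
    print(r.retailer, r.effective_price, r.currency, "in stock" if r.in_stock else "out of stock")
```

Keeping prices as `Decimal` and making the optional fields explicit is one reasonable design choice here; a pandas DataFrame or a database table would serve the same purpose.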

Supporting Product Matching and Catalog Alignment

A key challenge in pricing intelligence is mapping competitor products to your own catalog.

Different retailers describe the same product differently. Identifiers may be missing, naming conventions vary, and attributes are inconsistent.

Structured product data significantly simplifies this process. With consistent attributes and identifiers, companies can implement matching logic based on:

standardized product codes (EAN, UPC)

brand and model information

attribute similarity

internal matching models

This makes cross-retailer comparison more reliable and reduces manual effort.
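A minimal sketch of tiered matching logic, under stated assumptions: products are plain dictionaries with hypothetical `ean`, `brand`, and `title` keys, an exact identifier match is tried first, and the fallback compares titles within the same brand using the standard library's `difflib.SequenceMatcher`. The 0.6 threshold is an arbitrary illustrative value, and production matching would usually need richer attribute comparison.

```python
from difflib import SequenceMatcher
from typing import Optional


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial formatting differences are ignored."""
    return " ".join(text.lower().split())


def match_product(competitor: dict, catalog: list[dict],
                  threshold: float = 0.6) -> Optional[dict]:
    """Return the catalog item that best matches a competitor product, or None."""
    # Tier 1: exact match on a standardized product code, when available.
    ean = competitor.get("ean")
    if ean:
        for item in catalog:
            if item.get("ean") == ean:
                return item

    # Tier 2: same brand, most similar title (threshold is an illustrative value).
    best_item, best_score = None, 0.0
    for item in catalog:
        if normalize(item["brand"]) != normalize(competitor["brand"]):
            continue
        score = SequenceMatcher(
            None, normalize(item["title"]), normalize(competitor["title"])
        ).ratio()
        if score > best_score:
            best_item, best_score = item, score
    return best_item if best_score >= threshold else None


# Hypothetical catalog and competitor listings.
catalog = [
    {"sku": "K-100", "ean": "4006381333931", "brand": "Acme", "title": "Acme Kettle 1.7L"},
    {"sku": "T-200", "ean": None, "brand": "Acme", "title": "Acme Toaster 2-Slice"},
]
by_code = match_product({"ean": "4006381333931", "brand": "ACME", "title": "Kettle"}, catalog)
by_title = match_product({"ean": None, "brand": "acme", "title": "ACME Kettle 1.7 L"}, catalog)
no_match = match_product({"ean": None, "brand": "Other", "title": "Kettle 1.7L"}, catalog)
```

Trying the identifier first keeps the fuzzy comparison as a fallback for listings where codes are missing, which is the situation described above.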

Flexible Data Updates Based on Market Needs

Pricing data is only useful if it reflects current market conditions.

Different use cases require different update frequencies. Fast-moving categories benefit from more frequent updates, but in many cases a daily or less frequent refresh is sufficient to support pricing analysis and decision-making.

Our infrastructure supports flexible update schedules aligned with business needs, including:

daily updates for ongoing competitor monitoring

weekly updates for trend tracking and reporting

monthly datasets for long-term analysis and benchmarking

custom schedules based on specific use cases

Because data collection is handled externally, adjusting update frequency does not add engineering overhead on your side.

How Companies Use This Data

Once integrated into internal systems, competitor pricing data becomes the foundation for pricing intelligence.

Common applications include:

Competitive price monitoring

Tracking how your prices compare to competitors across products and categories.

Price index analysis

Measuring your market position over time and identifying pricing trends.

Promotion tracking

Detecting competitor discounts and campaigns as soon as they show up in the collected data.

Dynamic pricing systems

Feeding competitor data into pricing algorithms that adjust prices based on market conditions.

Market and category insights

Analyzing long-term trends, assortment strategies, and competitive behavior.

These capabilities enable companies to move from reactive pricing to more systematic and data-driven strategies.
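To make the price index idea concrete, here is a small sketch using one common definition: your own price expressed as a percentage of the mean competitor price, where 100 means parity. Both this definition and the sample figures are assumptions for illustration; other definitions (median-based, or weighted by sales volume) are equally valid.

```python
from statistics import mean


def price_index(own_price: float, competitor_prices: list[float]) -> float:
    """Own price as a percentage of the mean competitor price (100 = parity)."""
    if not competitor_prices:
        raise ValueError("at least one competitor price is required")
    return 100.0 * own_price / mean(competitor_prices)


# Hypothetical prices for two matched products.
own = {"kettle": 30.0, "toaster": 45.0}
competitors = {"kettle": [25.0, 35.0], "toaster": [50.0, 40.0, 60.0]}

indices = {sku: price_index(own[sku], competitors[sku]) for sku in own}
overall = mean(indices.values())  # unweighted average across products

for sku, value in indices.items():
    position = "above" if value > 100 else "at or below"
    print(f"{sku}: index {value:.1f} ({position} the competitor average)")
```

Computing the same index on each data refresh and storing the results gives a simple time series for tracking market position.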

Why Many Companies Avoid Building Scraping Infrastructure

While building an internal scraping system may seem attractive initially, the operational reality is often underestimated.

A reliable system typically requires:

dedicated engineering resources

proxy and access infrastructure

anti-bot handling strategies

continuous maintenance and monitoring

ongoing adaptation to website changes

Over time, this becomes a significant operational burden that does not directly contribute to business differentiation.

By separating data acquisition from analytics, companies can focus their resources on pricing models, strategy, and decision-making.

Conclusion

Competitor price monitoring is no longer a simple data collection task. It requires reliable access to dynamic, frequently changing, and protected ecommerce environments.

The key distinction is between collecting data and using it.

While pricing intelligence drives business value, data acquisition is a complex infrastructure problem on its own.

By delivering structured, reliable competitor pricing data, we remove that complexity—allowing teams to focus on what actually matters: building effective pricing strategies based on accurate market data.